Excerpt:

“Even within the coding, it’s not working well,” said Smiley. “I’ll give you an example. Code can look right and pass the unit tests and still be wrong. The way you measure that is typically in benchmark tests. So a lot of these companies haven’t engaged in a proper feedback loop to see what the impact of AI coding is on the outcomes they care about. Lines of code, number of [pull requests], these are liabilities. These are not measures of engineering excellence.”

Measures of engineering excellence, said Smiley, include metrics like deployment frequency, lead time to production, change failure rate, mean time to restore, and incident severity. And we need a new set of metrics, he insists, to measure how AI affects engineering performance.
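For anyone wanting to see what tracking those delivery metrics could look like, here is a minimal sketch. The `Deployment` record, its field names, and the `summarize` helper are hypothetical and invented for illustration; they don't come from the article or from any particular tool. Incident severity is left out because it needs a severity scale the excerpt doesn't define.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    lead_time: timedelta                        # commit to running in production
    failed: bool                                # did this change cause a failure?
    time_to_restore: Optional[timedelta] = None  # only set for failed changes


def summarize(deployments, period_days):
    """Compute four delivery metrics over a reporting period (assumed in days)."""
    n = len(deployments)
    if n == 0 or period_days <= 0:
        return None  # nothing meaningful to report
    failures = [d for d in deployments if d.failed]
    restores = [d.time_to_restore for d in failures if d.time_to_restore is not None]
    return {
        "deployment_frequency_per_day": n / period_days,
        "mean_lead_time": sum((d.lead_time for d in deployments), timedelta()) / n,
        "change_failure_rate": len(failures) / n,
        "mean_time_to_restore": (
            sum(restores, timedelta()) / len(restores) if restores else None
        ),
    }


sample = [
    Deployment(timedelta(hours=2), failed=False),
    Deployment(timedelta(hours=4), failed=True, time_to_restore=timedelta(minutes=30)),
    Deployment(timedelta(hours=6), failed=False),
    Deployment(timedelta(hours=4), failed=True, time_to_restore=timedelta(minutes=90)),
]
report = summarize(sample, period_days=2)
```

Comparing a report like this before and after introducing AI tooling is one way to build the feedback loop the quote says is missing, assuming the deployment records are reliable in the first place.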

“We don’t know what those are yet,” he said.

One metric that might be helpful, he said, is measuring tokens burned to get to an approved pull request – a formally accepted change in software. That’s the kind of thing that needs to be assessed to determine whether AI helps an organization’s engineering practice.
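A rough sketch of how that metric might be computed, assuming you can attribute token usage to individual pull requests. The `PullRequest` record and its fields are hypothetical. Counting tokens spent on rejected pull requests in the numerator is my own assumption, made so that wasted attempts show up as cost; the article doesn't specify this.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    """Hypothetical record; field names are illustrative only."""
    tokens_used: int   # LLM tokens consumed while producing this PR
    approved: bool     # whether the PR was formally accepted


def tokens_per_approved_pr(prs):
    """Total tokens burned (including on rejected PRs) per approved PR."""
    approved = sum(1 for pr in prs if pr.approved)
    if approved == 0:
        return None  # no approved work, so the ratio is undefined
    total_tokens = sum(pr.tokens_used for pr in prs)
    return total_tokens / approved


prs = [
    PullRequest(tokens_used=1000, approved=True),
    PullRequest(tokens_used=3000, approved=False),
    PullRequest(tokens_used=2000, approved=True),
]
cost = tokens_per_approved_pr(prs)  # 6000 tokens over 2 approved PRs
```

On its own this only measures cost per accepted change; it says nothing about whether the accepted change was any good, which is why it would have to be read alongside quality metrics such as change failure rate.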

To underscore the consequences of not having that kind of data, Smiley pointed to a recent attempt to rewrite SQLite in Rust using AI.

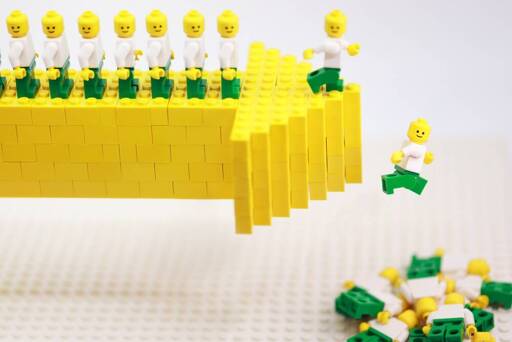

“It passed all the unit tests, the shape of the code looks right,” he said. “It’s 3.7x more lines of code that performs 2,000 times worse than the actual SQLite. Two thousand times worse for a database is a non-viable product. It’s a dumpster fire. Throw it away. All that money you spent on it is worthless.”

All the optimism about using AI for coding, Smiley argues, comes from measuring the wrong things.

“Coding works if you measure lines of code and pull requests,” he said. “Coding does not work if you measure quality and team performance. There’s no evidence to suggest that that’s moving in a positive direction.”

LLMs don’t need to be better. They just need to be more profitable. And wages are very expensive. Doesn’t matter if they lose a couple of customers when they can reduce cost.

It is all part of the enshittification of the company and for the enrichment of the shareholders.

Except LLMs aren’t profitable. They’re propped up by venture capital on the one hand and desperately integrated into systems with no case study on the effects on profit on the other. Video game CEOs are surprised and appalled when gamers turn against AI, implying they did literally no market research before investing billions.

When venture capital dries up and companies have to bear the full cost of LLMs themselves - or worse: if LLM companies go bankrupt and their API goes dead - any company that adopted LLMs into their workflow is going to suffer tremendously. Imagine if they fired half their employees because the LLM does that work and then the LLM stops working. So even if you could lose some money this quarter to invest in it and maybe gain some back by the end of this year, several years from now the company could be under existential threat.

And again, it can be acceptable to take this sort of risk if the technology is one without which you might at some point be unable to serve customers and business partners. But LLMs and genAI are not that sort of technology. Maybe business partners will hate you if you don’t go along with the buzzword mania, but then you should fake it and let it cause as little damage as possible.

A company that adopts LLMs is not enshittifying, it is setting itself up to be a victim of LLM enshittification.

Shareholders would be richer in the short term if they didn’t waste money investing in LLM adoption, and much richer in the long term if theirs were one of the few companies that don’t go bankrupt when the LLM bubble pops.

The purpose of LLM adoption is to weaken the social and political position of workers: to create an extra rival that breaks their collective bargaining power, even if it costs capital unfathomable amounts of money. It’s like when capitalists oppose universal basic income even though, if adopted, it would massively increase their profit margins because workers wouldn’t get sick as often. Capitalists are fully capable of acting in solidarity with each other for purposes of class warfare, even if it comes at a personal loss.